ConTact Framework

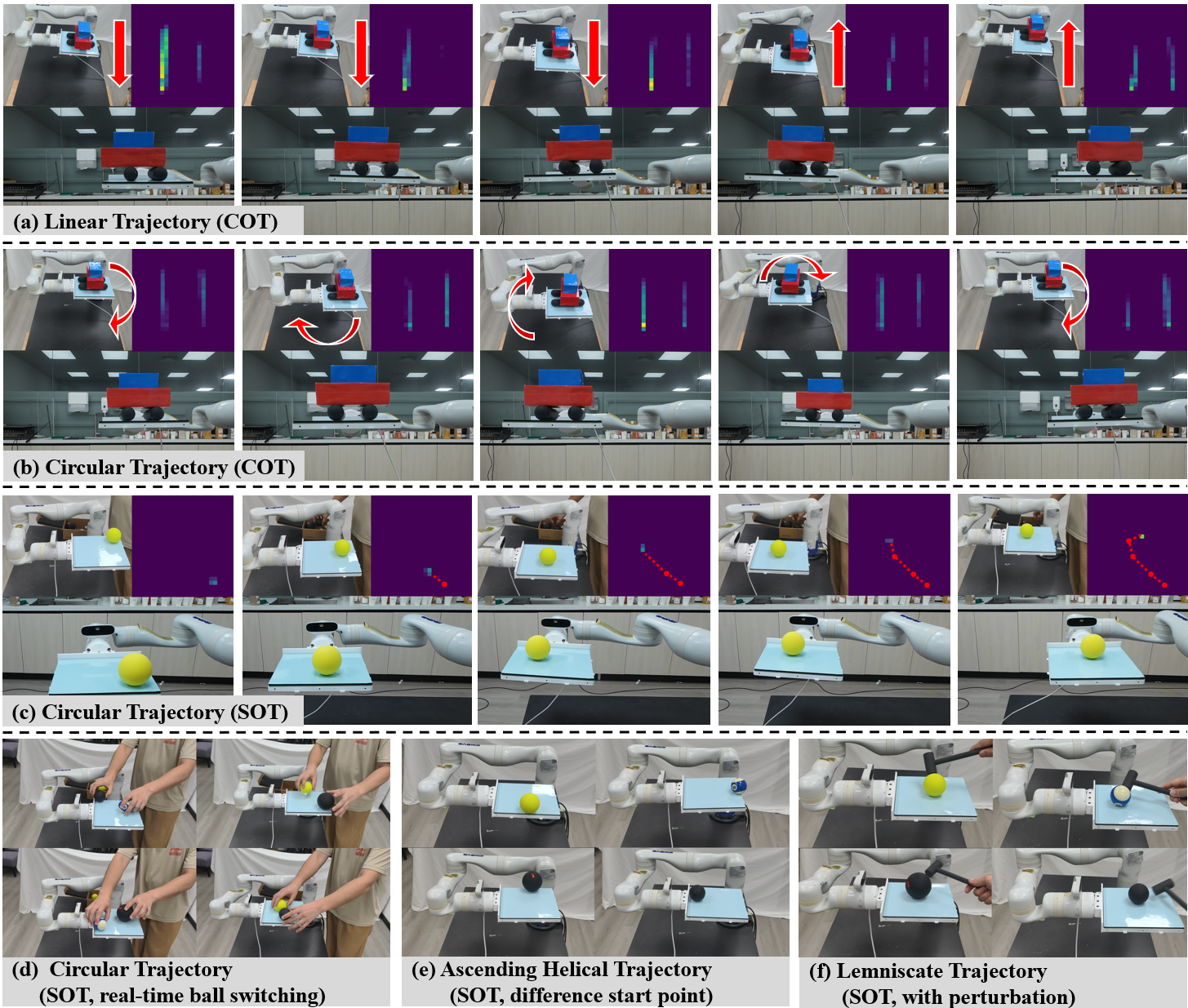

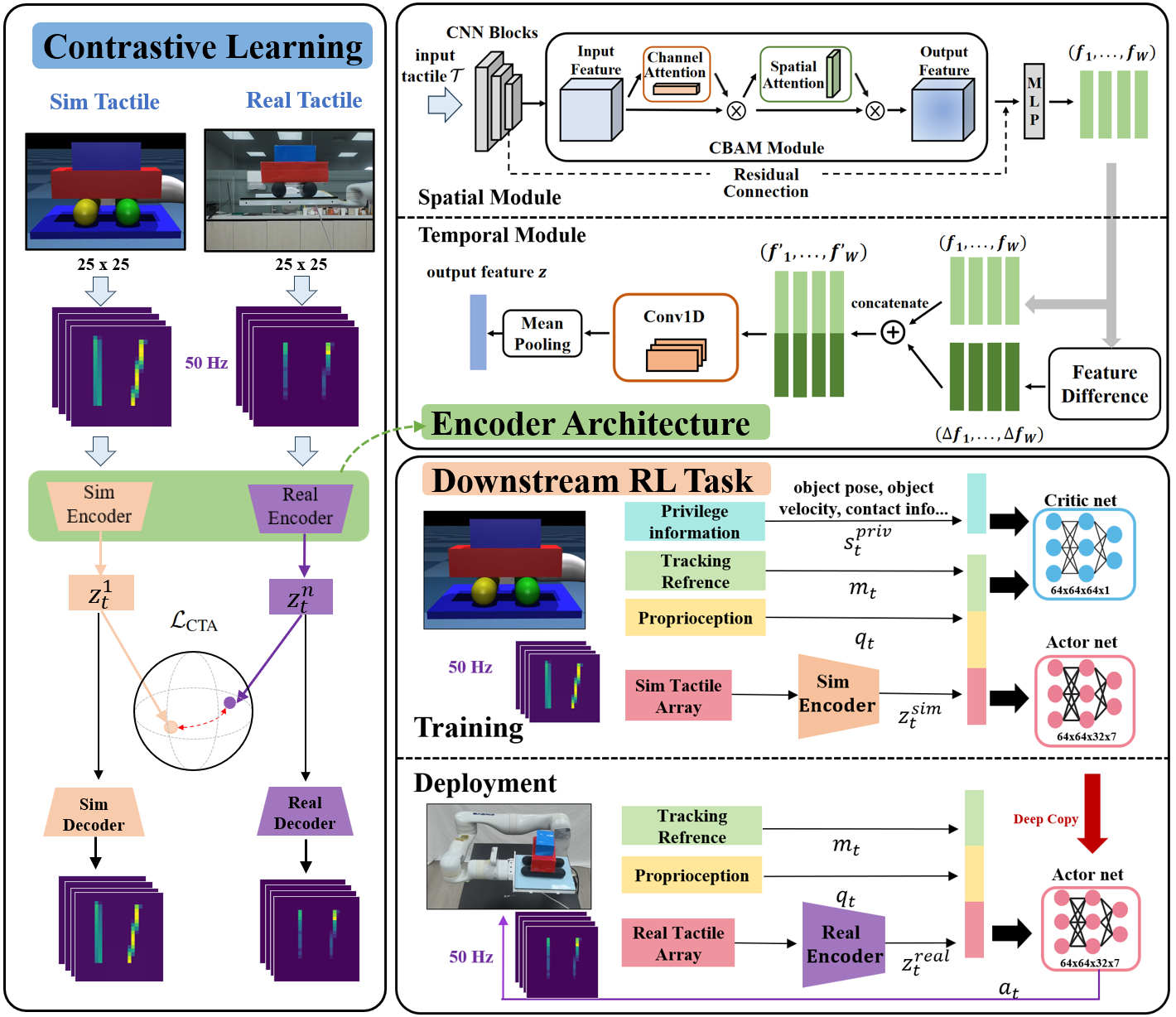

(a) Contrastive Learning Pre-training. (b) Spatio-temporal Encoder Architecture. (c) Downstream RL Task & Deployment.

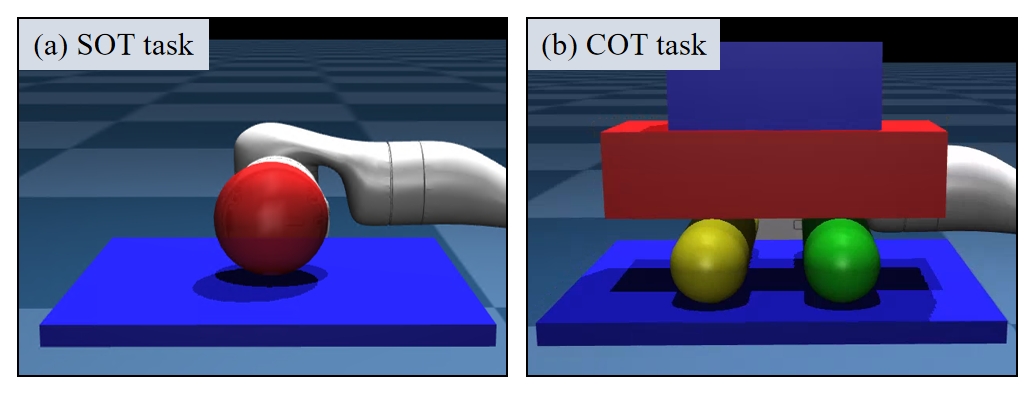

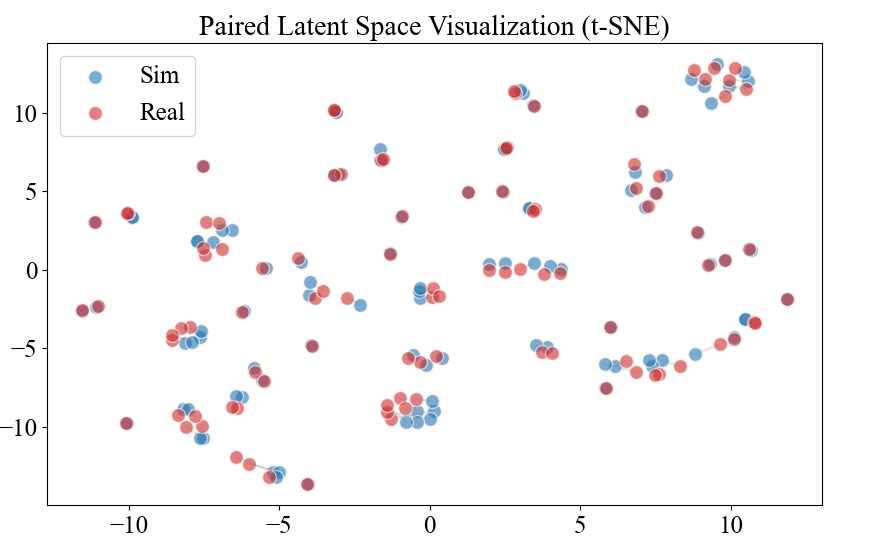

The ConTact framework addresses the sim-to-real gap in tactile sensing by aligning the features of simulated and real tactile data. We first design a computationally efficient ray-casting tactile simulation model in MuJoCo MJX. Building on this simulator, we propose ConTact, a Contrastive Tactile pre-training framework.

As depicted above, ConTact combines contrastive learning, driven by a symmetric contrastive loss \(\mathcal{L}_{CTA}\), with a spatio-temporal encoder to align tactile features across the simulated and real domains. The resulting unified representations are used directly in downstream deep reinforcement learning (DRL) tasks, eliminating the need for real-world fine-tuning.
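The exact form of \(\mathcal{L}_{CTA}\) is not given here. A common way to build a *symmetric* contrastive loss is the CLIP-style InfoNCE objective, sketched below in plain Python under that assumption: `sim_feats[i]` and `real_feats[i]` are treated as a positive pair (the same contact event seen in simulation and on the real sensor), every other pairing in the batch is a negative, and the cross-entropy is averaged over the sim-to-real and real-to-sim directions. The function names and the `temperature` value are hypothetical and are not taken from the ConTact implementation.

```python
import math


def _normalize(v):
    # Scale a vector to unit L2 norm (guard against a zero vector).
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def _cross_entropy_diag(logits):
    # Mean cross-entropy where the correct "class" for row i is column i.
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def symmetric_contrastive_loss(sim_feats, real_feats, temperature=0.1):
    """CLIP-style symmetric InfoNCE between paired sim/real embeddings.

    Assumption: this stands in for L_CTA; the source only says the loss
    is a symmetric contrastive loss.
    """
    z_sim = [_normalize(v) for v in sim_feats]
    z_real = [_normalize(v) for v in real_feats]
    # logits[i][j] = cosine(z_sim[i], z_real[j]) / temperature
    logits = [
        [sum(a * b for a, b in zip(s, r)) / temperature for r in z_real]
        for s in z_sim
    ]
    logits_t = [list(col) for col in zip(*logits)]  # real -> sim direction
    return 0.5 * (_cross_entropy_diag(logits) + _cross_entropy_diag(logits_t))


sim = [[1.0, 0.0], [0.0, 1.0]]
real_aligned = [[0.9, 0.1], [0.1, 0.9]]
real_swapped = [[0.1, 0.9], [0.9, 0.1]]
print(symmetric_contrastive_loss(sim, real_aligned))  # small: pairs match
print(symmetric_contrastive_loss(sim, real_swapped))  # large: pairs mismatched
```

Because both directions are averaged, swapping the two domains leaves the loss unchanged, which is what makes the objective symmetric.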

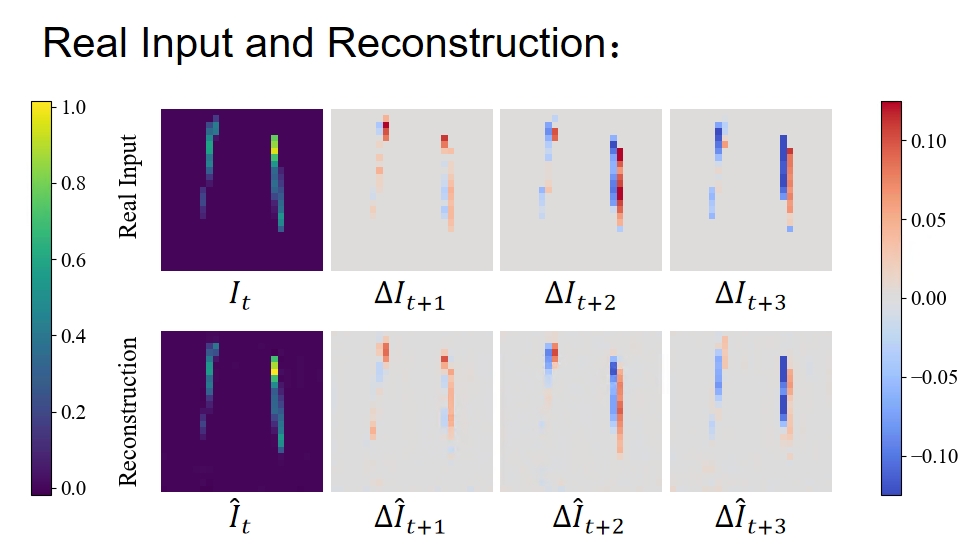

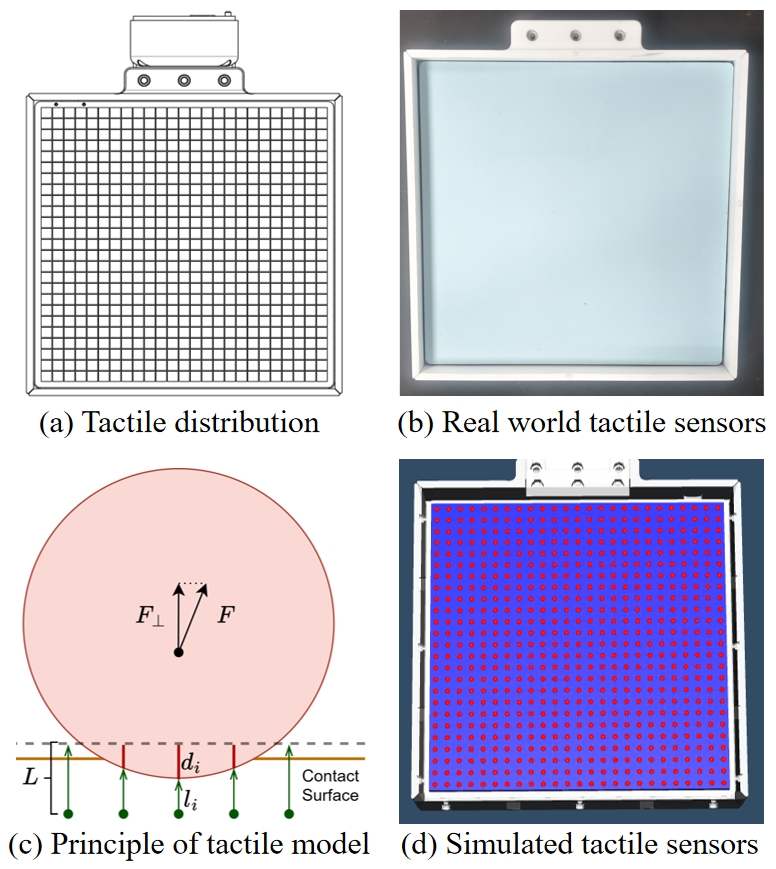

Tactile Array Simulation

Real-world vs Simulated Tactile Array
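The tactile model is described only as ray-casting in MuJoCo MJX. The plain-Python sketch below illustrates the general idea without using any MJX API. Several assumptions apply, all hypothetical: the taxels form a flat grid on the z = 0 pad, each taxel casts one ray along the pad normal (+z), the contacting object is a sphere, and a taxel's reading increases linearly as the object surface enters a sensing range `max_dist`. In MJX, the per-taxel loop would typically be vectorized with JAX rather than written as Python loops.

```python
import math


def ray_sphere_distance(origin, direction, center, radius):
    """Distance along a unit-length ray to a sphere, or None on a miss."""
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(a * d for a, d in zip(oc, direction))
    c = sum(a * a for a in oc) - radius * radius
    if c <= 0.0:
        return 0.0  # taxel lies inside the object: treat as full contact
    disc = b * b - c
    if disc < 0.0:
        return None  # ray misses the sphere entirely
    t = -b - math.sqrt(disc)  # nearest intersection along the ray
    return t if t >= 0.0 else None  # negative t: sphere is behind the taxel


def simulate_tactile_array(rows, cols, pitch, max_dist, center, radius):
    """Return a rows x cols grid of taxel readings in [0, 1] (hypothetical model)."""
    grid = []
    for i in range(rows):
        row = []
        for j in range(cols):
            origin = (j * pitch, i * pitch, 0.0)  # taxel position on the pad
            t = ray_sphere_distance(origin, (0.0, 0.0, 1.0), center, radius)
            # Closer surfaces give larger readings; out of range reads zero.
            row.append(0.0 if t is None or t > max_dist else 1.0 - t / max_dist)
        grid.append(row)
    return grid


# A sphere hovering above the middle taxel of a 3 x 3 pad.
for row in simulate_tactile_array(3, 3, 1.0, 1.0, (1.0, 1.0, 1.2), 0.5):
    print(row)
```

With these parameters only the centre taxel's ray reaches the sphere within range (hit distance 0.7, so a reading of about 0.3); the other taxels read zero.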